Wireless First: A Winning Strategy for Rural Broadband

The FCC has announced the initial group of members of its Broadband Deployment Advisory Committee and set the first meeting for April 21st. This signals the agency’s seriousness about doing something significant about the state of rural broadband in the US. The composition of the group, together with the remarks Chairman Pai has made about it, suggests that it will do more than simply write checks to build new networks at random and to subsidize day-to-day operations in those already running.

Broadband subsidies are chiefly financed by the Universal Service Fund – typically $8 billion per year – as well as various other programs such as the $500 million/year Mobility Fund, the USDA’s $3.4 billion Broadband Initiatives Program, and NTIA’s $3.5 billion Broadband Technology Opportunities Program. These programs add up to some serious money, but they haven’t typically had much direction; BIP in particular appears to be a waste of money.

Broadband Everywhere

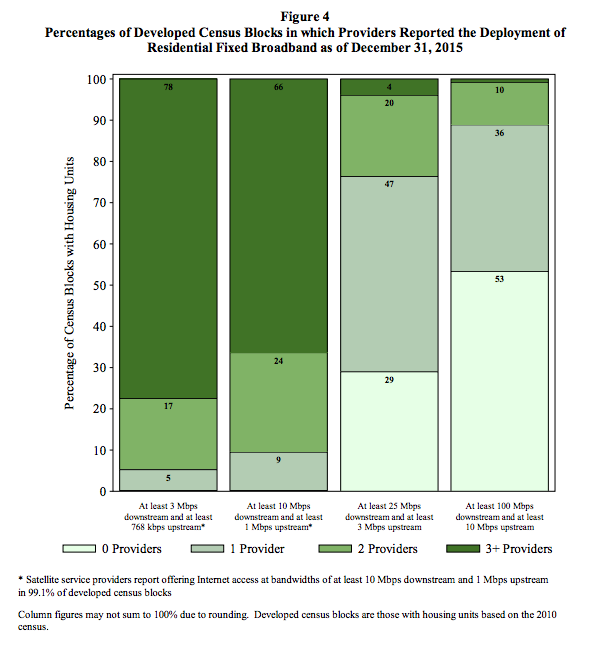

According to the FCC’s competition graph, most of the US – 99.1% of the population – already has access to broadband at 10 Mbps down/1 Mbps up. That’s sufficient to enjoy everything the Internet has to offer except for high-rate video conferencing. So the discussion is about quality and competition rather than simple coverage.

The Wheeler FCC created an apparent competition crisis by changing the definition of broadband from 10 to 25 Mbps down and from 1 to 3 Mbps up. This removed several perfectly capable networks from the mix, such as VDSL2 networks running at 20 – 24 Mbps and satellite options. There are two satellite providers offering service at > 10 Mbps, Viasat and Echostar, so their presence in the competition charts would have meant that virtually all of the US had competition as well as coverage.

While we can argue that satellite isn’t a perfect substitute for wired or terrestrial wireless, it’s perfectly adequate for ordinary web surfing and for many IoT applications. It’s certainly not appropriate to settle for satellite as a permanent solution because its high latencies make it a bad choice for voice and video conferencing. But the existence of the satellite alternative means that the rural coverage issue isn’t so urgent that we need to spend lots of money on short-term bandaids.

The FCC data also indicate that 99.1% of the US has a non-satellite player in the mix at 10 Mbps, so the most rural parts of the US that still have people are served by three carriers. One might well conclude that broadband coverage is pretty darn good.

Complicating Factors

But it’s not that simple. The FCC data are collected on a census tract basis, and we know that census tracts in rural areas aren’t uniformly served by terrestrial carriers. There is certainly a raft of anecdotal complaints from people who insist satellite won’t work for them because of trees. And cable modem service extends to only 93% of households. Hence, six percent of census tracts reach the 99.1% threshold because of terrestrial wireless options, chiefly LTE and Wi-Fi.

Terrestrial wireless systems have a bit more latency than wired networks, but much less than satellite. Typical latency is in the range of 30 ms or so for wired, 100 ms for LTE, and 600 ms for satellite. 5G is planned to reduce latency for wireless connections, but it’s ultimately bounded by signal propagation times that can’t beat the speed of light.
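The speed-of-light floor can be sketched with a little arithmetic. The snippet below is only an illustration: it assumes the satellite services in question use geostationary orbit (about 35,786 km up), that the satellite is directly overhead, and that a request and its reply each cross the space link twice. The function names are made up for this example.

```python
# Rough lower bound on satellite round-trip time from propagation delay alone.
# Assumptions (not from the FCC data): geostationary orbit, satellite directly
# overhead, no processing or queuing delay anywhere on the path.

SPEED_OF_LIGHT_KM_S = 299_792.458  # speed of light in vacuum
GEO_ALTITUDE_KM = 35_786           # geostationary altitude above the equator


def propagation_delay_ms(distance_km: float) -> float:
    """One-way time for a radio signal to cover distance_km, in milliseconds."""
    return distance_km / SPEED_OF_LIGHT_KM_S * 1000.0


def geo_round_trip_ms(altitude_km: float = GEO_ALTITUDE_KM) -> float:
    """Round trip: user -> satellite -> gateway, then gateway -> satellite -> user."""
    return 4 * propagation_delay_ms(altitude_km)


if __name__ == "__main__":
    print(f"Minimum geostationary round trip: {geo_round_trip_ms():.0f} ms")
```

Under those assumptions the floor comes out to roughly 477 ms, which is why a typical figure of 600 ms leaves little room for improvement no matter how good the equipment gets.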

That’s OK because the most demanding mainstream network applications, voice and video conferencing, work well up to about 200 ms of latency. Extremely low latencies – 50 ms or so – are preferred by gamers playing shoot ’em up games, but that’s a fringe use case.

What this all means is that wired connections will probably never reach more than 95% of American homes, farms, and offices, but that’s going to be OK because most of the rest will be connected by low-latency wireless.

Design Choices

While the discussion about broadband quality focuses on raw bandwidth, latency becomes a critical factor when bandwidth reaches or exceeds 10 Mbps. Increasing bandwidth from 10 to 20 Mbps has little or no perceptible impact on web page load time, but lower latency makes for perceptible improvements in many cases because TCP – the most common Internet transport protocol – limits its own bandwidth. Lower latency allows TCP to ramp up to full capacity faster.
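To see why latency matters to TCP, here is a deliberately simplified sketch of the ramp-up. It assumes a classic slow start in which the sending window begins at 10 segments and doubles once per round trip until it covers the link’s bandwidth-delay product; real TCP stacks are more complicated, and the constants and function names here are illustrative choices rather than anything measured.

```python
import math

# Simplified slow-start model. Assumptions: 1460-byte segments, an initial
# window of 10 segments, window doubling every round trip, no packet loss.
MSS_BYTES = 1460
INITIAL_WINDOW_SEGMENTS = 10


def slow_start_rounds(bandwidth_mbps: float, rtt_ms: float) -> int:
    """Round trips needed before the window covers the bandwidth-delay product."""
    bdp_bytes = bandwidth_mbps * 1e6 / 8 * (rtt_ms / 1000)
    bdp_segments = max(1, math.ceil(bdp_bytes / MSS_BYTES))
    rounds = 0
    cwnd = INITIAL_WINDOW_SEGMENTS
    while cwnd < bdp_segments:
        cwnd *= 2
        rounds += 1
    return rounds


def ramp_up_time_ms(bandwidth_mbps: float, rtt_ms: float) -> float:
    """Time spent ramping up, counting one round-trip time per doubling."""
    return slow_start_rounds(bandwidth_mbps, rtt_ms) * rtt_ms


if __name__ == "__main__":
    for label, rtt in [("wired", 30), ("LTE", 100), ("satellite", 600)]:
        print(f"{label}: {ramp_up_time_ms(10, rtt):.0f} ms to fill a 10 Mbps link")
```

In this toy model a 10 Mbps connection takes about 60 ms to reach full speed at 30 ms of latency, 400 ms at 100 ms, and 3,600 ms at 600 ms. Short web transfers are often finished before the ramp-up completes, so the round-trip time rather than the headline bandwidth sets the pace.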

And as we’ve seen, extremely high latencies make some non-web apps hard or impossible to run. So any exercise in goal-setting has to take both bandwidth and latency into account. And rather than setting arbitrary targets for these two dimensions of broadband quality, we should look to the applications themselves for their requirements.

If we want to ensure that rural broadband is good enough for country folk to enjoy the same benefits that city people gain from their Internet connections, we don’t have to match the networks number for number. It’s sufficient to have rural networks capable of running the same applications used by urbanites. This is an important distinction because most of the capacity in networks with hundreds of megabits per second capacity goes to waste most of the time.

Data limits also limit consumer ability to use some applications. Video streaming consumes 1 – 4 GB per hour, so plans capped at less than 100 GB/month mean that the Internet won’t be more than a complement to cable or satellite TV networks. But satellite broadband is often a complementary service to satellite TV, so access to one implies access to the other.
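The arithmetic behind that cap is easy to check. This is a back-of-the-envelope sketch using the figures above (1 to 4 GB per hour of streaming, a 100 GB monthly cap); the 30-day month and the function names are assumptions made for illustration.

```python
# How many hours of streaming fit under a monthly data cap?
# Assumption: all of the cap goes to video, and a month has 30 days.

DAYS_PER_MONTH = 30


def streaming_hours_per_month(cap_gb: float, gb_per_hour: float) -> float:
    """Hours of video that fit under the cap at a given consumption rate."""
    return cap_gb / gb_per_hour


def streaming_hours_per_day(cap_gb: float, gb_per_hour: float) -> float:
    """The same budget spread evenly over the month."""
    return streaming_hours_per_month(cap_gb, gb_per_hour) / DAYS_PER_MONTH


if __name__ == "__main__":
    for rate in (1, 4):
        hours = streaming_hours_per_day(100, rate)
        print(f"{rate} GB/hour: {hours:.1f} hours of video per day on a 100 GB cap")
```

At 4 GB per hour, a 100 GB cap allows only 25 hours of video a month, which is less than an hour a day for the whole household; at 1 GB per hour it stretches to a little over three hours a day.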

Bang for the Buck

In a perfect world, we might well want to see fiber optic cable running to every home, but in this world that’s an expensive proposition. ITIF’s Doug Brake shows just how costly:

Paul de Sa, former chief of the FCC’s Office of Strategic Planning and Policy Analysis, put out a paper arguing that a goal of federal broadband infrastructure policy “should be to increase and accelerate profitable, incremental private-sector investment to achieve at least 98 percent nationwide deployment of future-proofed, fixed broadband networks.” Using the FCC’s cost models, de Sa says this increase of four percentage points can be achieved for about $40 billion. Achieving the last two percent, going from 98 percent to 100 percent, would double the cost.

In de Sa’s model, “future proofed” networks are either fiber or coaxial cable. The $40B price tag for 98% coverage would exhaust the USF for five years, assuming no provider received any operations support, which is not the way USF works. Increasing the goal to 100% coverage means 10 years with nothing but new builds, an altogether unrealistic scenario.
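Those timelines follow from simple division. The sketch below uses the numbers already cited ($8 billion per year for the USF, $40 billion for 98% coverage, double that for 100%) and assumes, unrealistically, that every USF dollar goes to new construction; the names are invented for the example.

```python
# Years of USF funding consumed by a wired buildout.
# Assumption: the whole annual fund goes to new builds, none to operations.

USF_ANNUAL_BUDGET_BILLIONS = 8.0
COST_98_PERCENT_BILLIONS = 40.0
COST_100_PERCENT_BILLIONS = 2 * COST_98_PERCENT_BILLIONS  # "would double the cost"


def years_of_usf(cost_billions: float,
                 annual_budget_billions: float = USF_ANNUAL_BUDGET_BILLIONS) -> float:
    """Years needed to pay for a buildout out of the annual fund alone."""
    return cost_billions / annual_budget_billions


if __name__ == "__main__":
    print(f"98% coverage:  {years_of_usf(COST_98_PERCENT_BILLIONS):.0f} years")
    print(f"100% coverage: {years_of_usf(COST_100_PERCENT_BILLIONS):.0f} years")
```

Any share of the fund that continues to go to operations support stretches both figures further.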

An alternative would be to target 100% coverage with LTE with high data caps (or no caps at all). Because we’re already at 99%, we can reach the goal much sooner and with much less investment. The buildout part would consist of towers and backhaul; in many areas the backhaul can also be wireless.

Over time, we can forecast that fiber would replace wireless backhaul, ultimately reaching beyond initial tower locations to a new network of small cells for 5G. Because of its flexibility and economic virtues, 5G is probably the new normal for residential broadband. With less dependence on wire, 5G will be more reliable and cheaper to upgrade.

Moore’s Law and Broadband

Today’s networks are more like information systems than were the telecom networks of the past. Improvements in broadband speed, latency, and reliability can come about from better chips, better software, and better system planning.

The nice thing about focusing on wireless for the final leg of the extended broadband system is that it doesn’t duplicate effort or waste money. Despite the glory of fiber optic networks, people want mobility. So wireless is going to be part of the solution regardless. Why don’t we just accept that and concentrate on building the best wireless networks first and fill in with fiber only when and where it’s truly needed?

Finally, it’s worth noting that the market has already gravitated toward Wireless First. It just makes sense.