Trouble in Fibertown

When faced with the need to either stagnate or grow, Novell chose the status quo path. Let’s hope Orem doesn’t repeat the error with UTOPIA. It might have been a great idea in 2002, but the visions many of us had of networking in those days were blind to the progress that was possible for wireless. That was a serious miscalculation.

2018 Broadband Deployment Report

After flirting with some major restructuring in the way broadband progress is assessed in the US, Chairman Pai has released a fact sheet that maintains the analytical status quo with…

Tech Policy Tribalism

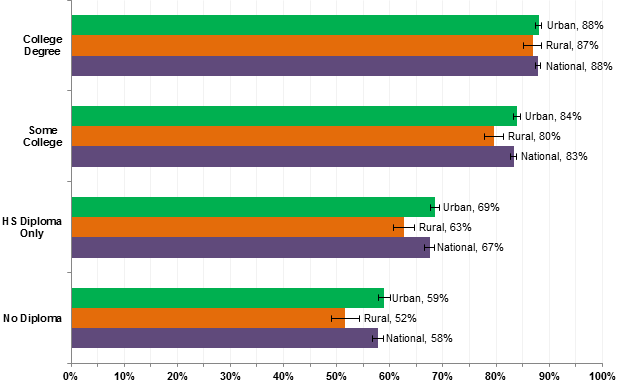

The tribal forces of the left appear to be forming a drum circle around the idea that rural broadband is entirely screwed up in the US so we need to create thousands of broadband co-ops to solve the problem in a few decades. I think we can do a lot better, but only if we can forget about the tribal identities and apply some reasoning informed by facts.

My FCC Comments on Broadband Progress

Here’s the summary of the comments I filed with the FCC on its broadband deployment report to Congress. A lot of the ink in the mainstream media today echoes a…

Progress in the Debate over TV White Space

Tuesday (July 11, 2017), Microsoft unveiled their current vision for unlicensed radio services in the TV White Space (see “Microsoft calls for U.S. strategy to eliminate rural broadband gap within…

Microsoft Closes Digital Divide! Heh, Just Kidding

Happy Prime Day! Here’s one special deal you don’t want to buy: Microsoft’s grand plan to bring high speed broadband to the less-populated fringe of rural America for peanuts. It…

Remind Me: Why Should I Care about Net Neutrality?

End-to-end is part of Internet history, but so is traffic differentiation. On the one hand, some forms of discrimination at the packet level are constructive. Applications have different needs and it’s good for networks to provide them with the type of service they desire.

American Broadband Policy: Information over Manipulation

While it’s true that Americans aren’t dancing in the streets over ISP customer service, it’s unrealistic to claim that replacing ISPs with municipal utility providers will change this dynamic at all.

Voluntary Net Neutrality: Holy Grail or Total Hoax?

If net neutrality is what its supporters say it is – the best overall way of setting expectations and managing Internet service agreements – it should be expected to become self-executing at some point. I think we passed that point about ten years ago, but we will see what we will see.

Wireless First: A Winning Strategy for Rural Broadband

The nice thing about focusing on wireless for the final leg of the extended broadband system is that it doesn’t duplicate effort or waste money. Despite the glory of fiber optic networks, people want mobility. So wireless is going to be part of the solution regardless. Why don’t we just accept that and concentrate on building the best wireless networks first and fill in with fiber only when and where it’s truly needed?